JavaScript Augmented Reality

Posted by Alistair Macdonald

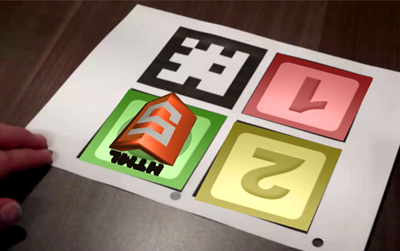

Augmented Reality: using JSARToolkit with WebGL & HTML5 Video

Last week I spent some time running tests and generally familiarizing myself with the JSARToolkit code. I also built a JavaScript wrapper for the library to make it easier to use.

JSARToolkit is a JavaScript library converted from FLARToolkit (Flash), built for tracking AR Markers in video footage. The ARToolkit converts the data from the markers into 3D coordinates with which you can superimpose images and other 3D or 2D content.

What is Augmented Reality?

“Augmented reality (AR) is a term for a live direct or an indirect view of a physical, real-world environment whose elements are augmented by computer-generated sensory input, such as sound or graphics. It is related to a more general concept called mediated reality, in which a view of reality is modified (possibly even diminished rather than augmented) by a computer. As a result, the technology functions by enhancing one’s current perception of reality. By contrast, virtual reality replaces the real-world with a simulated one.”

– Wikipedia

You may have already seen JSARToolkit in action on Ilmari Heikkinen’s awesome “Remixing Reality” demo. Ilmari’s demo is part of Mozilla’s “Web O’ Wonder”, a site showcasing some of the new technology being released in Firefox 4. Props to Ilmari for an inspiring demo!

Resources:

You will need the latest version of Firefox 4 or Chrome to see these demos working.

HTML5 Music-Video Research

We were asked by a client to evaluate the feasibility of using JSARToolkit for an online HTML5-infused music video. (We were only asked to consider users running the latest versions of Firefox and Chrome.) Some of the questions we wanted to answer were:

- Would the processing be fast enough for slower machines?

- How many AR markers can we track at once?

- How fast can you move the marker before it becomes un-track-able?

- What is the maximum distance at which the camera can track?

Answers to these questions can be found below.

Recording Video

Flip Ultra HD: video camera

To record some test video I used a Flip Ultra HD video camera. While the quality on the Flip Ultra HD is pretty good, it’s obviously not a production-level camera. We knew that the results we got with the Flip would be a worst-case scenario. The main problem we found with such a low-grade video device was its inability to change the shutter speed.

This meant there was absolutely nothing we could do about the blurring of the AR Markers when they moved too fast. We were surprised to see how quickly we lost the ability to track a marker when moving it from side to side. However, we are very confident that when shooting in a well-lit studio with a high shutter speed, there would be very few un-track-able frames.

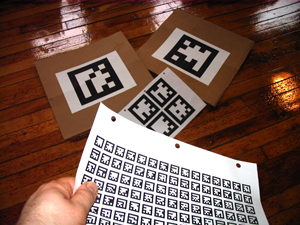

Printing The AR Markers

AR Markers: printed markers for HTML5 video tracking

I printed out some AR Markers that came with the JSARToolkit and began filming basic tests on the kitchen counter. I did not expect things to work first try, but I kept throwing video at the library and almost everything seemed to work.

The results were somewhat jumpy in places, but I have to reiterate, the quality of our camera is extremely poor compared to a production camera. We were also tracking the markers without any calibration for lens distortion, something that can add a significant amount of accuracy to the tracking.

Trans-coding Video to VP8 WebM

Blurred Markers: impossible to track

The videos were recorded on the Flip in H.264 MPEG format. Since we were working with HTML5 video, we needed to convert our videos to WebM. The first encoder I tried was Ffmpeg2Theora, which despite its name does encode WebM videos. However, I found Ffmpeg2Theora to be rather troublesome. When I encoded videos in Linux, sometimes they would not play in Windows and vice versa. I am constantly installing and un-installing video codecs on my machines, so I hope that my problems are not ones experienced by everybody trying to encode HTML5-ready video.

After a bit of testing, I settled on Miro Video Converter for all my trans-coding. Unfortunately Miro has no batch processing, but the encoder itself seemed to produce content that worked very well across all the browsers and platforms I tried.

Building A Wrapper

I wanted to have a quick go at writing a re-usable API for JSARToolkit, something simple that could be plugged in as part of another JavaScript library like Popcorn.js. The code I found in Ilmari’s demo was tailored very specifically for the task at hand. With little in the way of comments, I found it difficult to understand what was going on without a lot of experimentation. I came up with a simple way to wrap the existing library.

The first step in using the JSARToolkit Wrapper is initializing your tracker. This can be done like so:

Once the tracker has been created, the next step is to add some content to the markers. Here we are adding a static image, then a 3D object exported from Blender3D:

The snippet of code below demonstrates how to update the properties of the marker after it has been created:

You can also add more complex behavior using the JSARToolkit-Wrapper. The following code demonstrates how to update properties in real time. This code makes the first marker spin and pulse:
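As a rough, library-independent sketch of the spin-and-pulse idea, assume a hypothetical marker object with `rotation` and `scale` properties (these names are illustrative and are not taken from the wrapper's actual API). The update is driven by elapsed time, so the motion stays smooth regardless of frame rate:

```javascript
// Hypothetical per-frame update. `marker` is a plain object standing in for
// whatever the wrapper exposes; `rotation` and `scale` are assumed names.
function spinAndPulse(marker, timeSeconds) {
  // Spin: one full turn every 4 seconds, wrapped into [0, 2π).
  marker.rotation = (timeSeconds * Math.PI / 2) % (2 * Math.PI);
  // Pulse: scale oscillates between 0.8 and 1.2 once per second.
  marker.scale = 1 + 0.2 * Math.sin(2 * Math.PI * timeSeconds);
  return marker;
}

const marker = { rotation: 0, scale: 1 };
spinAndPulse(marker, 1); // after one second the marker has made a quarter turn
```

In a browser, a function like this would typically be called from a frame callback, passing the video's current playback time.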

To access and manipulate the video of the tracker, you can do something like this:

Answers to Questions

Tracking 100 Markers: BOOM!

Would the processing be fast enough for slower machines?

Processing the video to find and track the markers is actually very fast. I noticed very little difference in the actual tracking time between one marker and one hundred markers. The bulk of the work seems to be the process of superimposing new content over the video.

How many AR markers can we track at once?

I tracked 100 augmented reality markers simultaneously in JavaScript without any problems.

How fast can you move the marker before it becomes un-track-able?

This all depends on your particular video camera and how you film the footage. If you use high shutter speeds like those used when recording sports games, there will be very little (if any) blurring and markers should track very well.

What is the maximum distance at which the camera can track?

Again, this depends on a few factors, namely speed of movement and lighting. In a somewhat well-lit room (not a video studio), I was able to track AR Markers reliably up to 35 ft away from the lens at 720p resolution. The higher the resolution you track at, the better the results you will see. I tested the algorithm using a 2D canvas, moving the marker patterns around quickly (without any blurring), and it tracked perfectly. It seems remarkably accurate if there’s no blurring of the source footage. One thing worth mentioning is that you can film and track your video at 1080p (and probably higher), then cache the tracking results in JavaScript to reduce the amount of processing on the client side. This way you can use accurate tracking data from hi-res video sources and still use a small compressed output for your final web-video production.
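The caching idea can be sketched as a simple lookup table keyed by frame. This is only an illustration: the `trackingCache` structure, its field names, and the `markersAt` helper are all hypothetical, and positions are stored as fractions of the frame size so that data tracked at 1080p can be applied to a smaller output video.

```javascript
// Hypothetical cache: one entry per analysed frame, recorded ahead of time
// from the high-resolution source. Field names are assumptions for this sketch.
const trackingCache = {
  fps: 30,
  frames: [
    { markers: [{ id: 0, x: 0.50, y: 0.50 }] },
    { markers: [{ id: 0, x: 0.52, y: 0.50 }] },
    { markers: [] }, // marker lost in this frame (e.g. motion blur)
  ],
};

// Map a playback time in seconds to the cached markers for that frame.
// The index is clamped, so the cache must contain at least one frame.
function markersAt(cache, timeSeconds) {
  const index = Math.floor(timeSeconds * cache.fps);
  const clamped = Math.min(Math.max(index, 0), cache.frames.length - 1);
  return cache.frames[clamped].markers;
}
```

During playback the client would then only need to call something like `markersAt(trackingCache, video.currentTime)` and draw the overlay, rather than running the tracker on every frame.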

Tip: Adjust the “ratio” if you find things are not tracking properly or the “threshold” if the lighting is poor.
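To see why the threshold matters in poor lighting, here is a minimal sketch that assumes the “threshold” setting acts as a brightness cutoff separating dark marker ink from light paper. That is a common approach in marker trackers, but the exact behaviour inside JSARToolkit is not confirmed here, and the `binarize` helper and pixel values are made up for illustration:

```javascript
// Classify 0-255 grayscale pixels as dark (1) or light (0) against a cutoff.
function binarize(grayPixels, threshold) {
  return grayPixels.map((value) => (value < threshold ? 1 : 0));
}

// In a dim scene the "white" paper may only reach about 110, while the
// black marker ink sits around 40.
const dimScene = [40, 45, 110, 105];

const tooHigh = binarize(dimScene, 128); // [1, 1, 1, 1]: paper and ink both read as dark
const lowered = binarize(dimScene, 80);  // [1, 1, 0, 0]: ink and paper separate again
```

Under that assumption, lowering the threshold for dark footage (or raising it for overexposed footage) restores the contrast the tracker needs to find the marker border.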

Conclusions

Pros

- Simple to implement.

- Tracking algorithm not too CPU intensive.

- Can track at least 100 AR Markers at once.

- Can export directly from Blender3D.

- Can overlay any content, images, video, 3D objects, etc.

Cons

- Too many global variables in FLARToolkit conversion code.

- Blender3D export takes some tweaking to get some 3D objects to appear.

- No support for multiple simultaneous video sources in FLARToolkit conversion code.

- Lots of expensive getElementById() calls inside of frame-handlers.
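The last point is usually cheap to fix: look the element up once and reuse the reference inside the frame handler. The sketch below is generic and not taken from the JSARToolkit code; `makeFrameHandler` and the `"status"` id are hypothetical names used only for illustration.

```javascript
// Build a frame handler that performs the DOM lookup once, up front,
// instead of calling getElementById() on every frame.
function makeFrameHandler(doc, elementId) {
  const el = doc.getElementById(elementId); // single lookup, kept in the closure
  return function onFrame(frameNumber) {
    el.textContent = "frame " + frameNumber;
  };
}

// Browser usage (assumes an element with id "status" exists):
//   const onFrame = makeFrameHandler(document, "status");
//   onFrame(1);
```

Passing the document in as a parameter also makes the handler easy to exercise outside the browser with a stand-in object.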

Overall

There is definitely more work to be done if you want to use this code in a production environment or make it part of a larger library. But after a lot of testing and experimentation, I would say the code works surprisingly well! It is certainly feasible to do this kind of work in real time with JavaScript if you have the ability to target a specific demographic.